A wearable behavioral aid...

Over 1 million children under the age of 17 in the US are on the autism spectrum. These children often struggle to recognize basic facial emotions, which makes social interactions harder and friendships more difficult to develop and sustain. Gaining these skills requires intensive behavioral interventions that are often expensive, difficult to access, and inconsistently administered.

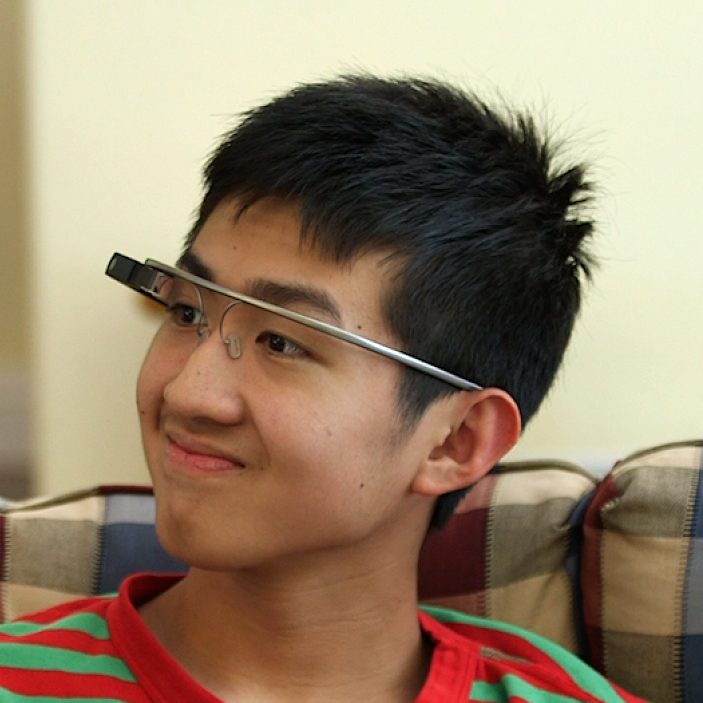

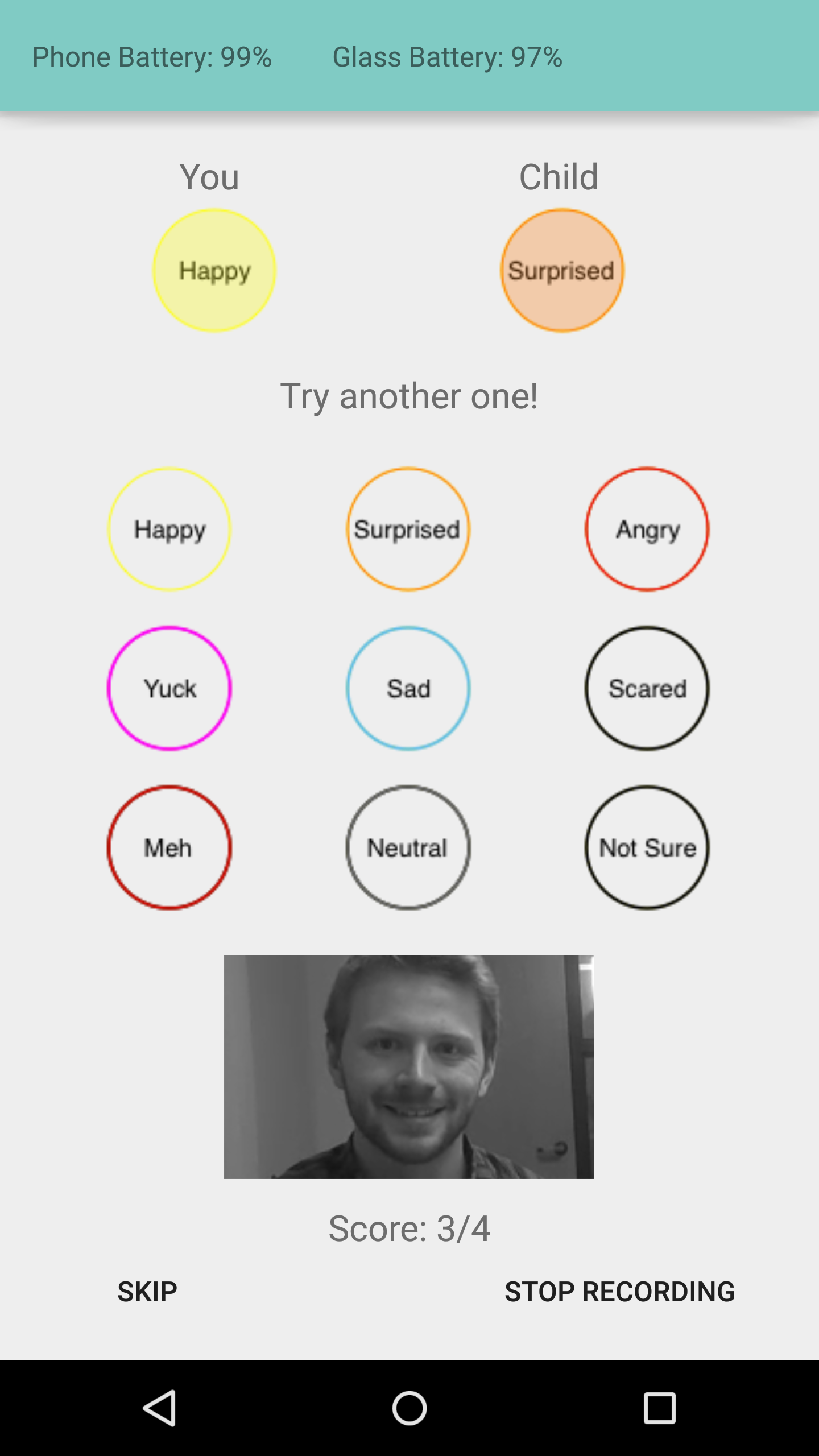

Our team at Stanford is researching a solution. We have developed a system that uses machine learning and artificial intelligence to automate facial expression recognition; it runs on wearable glasses and delivers real-time social cues. Our novel system uses the outward-facing camera on the glasses to read facial expressions and provides social cues within the child’s natural environment. It also records the amount and type of eye contact, which adds another layer for behavioral intervention.

Help design the autism glass system!

We are currently refining our mobile, at-home therapeutic aid and we are looking for families to test it out!

If you have a child who:

- is between 3 and 17 years old

- has a clinical diagnosis of ASD

- lives within driving distance of Stanford University

we would love to connect with you and have you join our study.

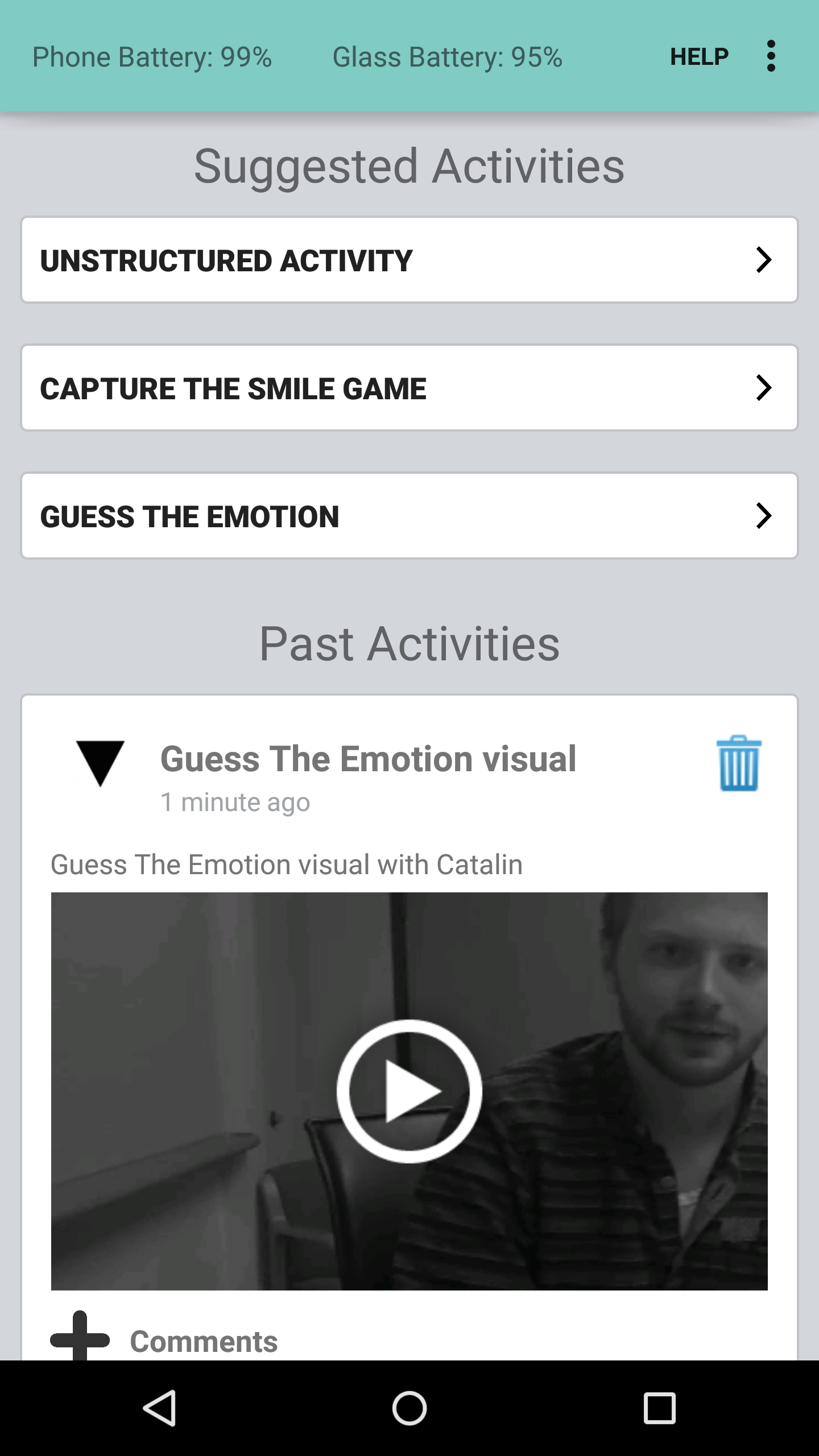

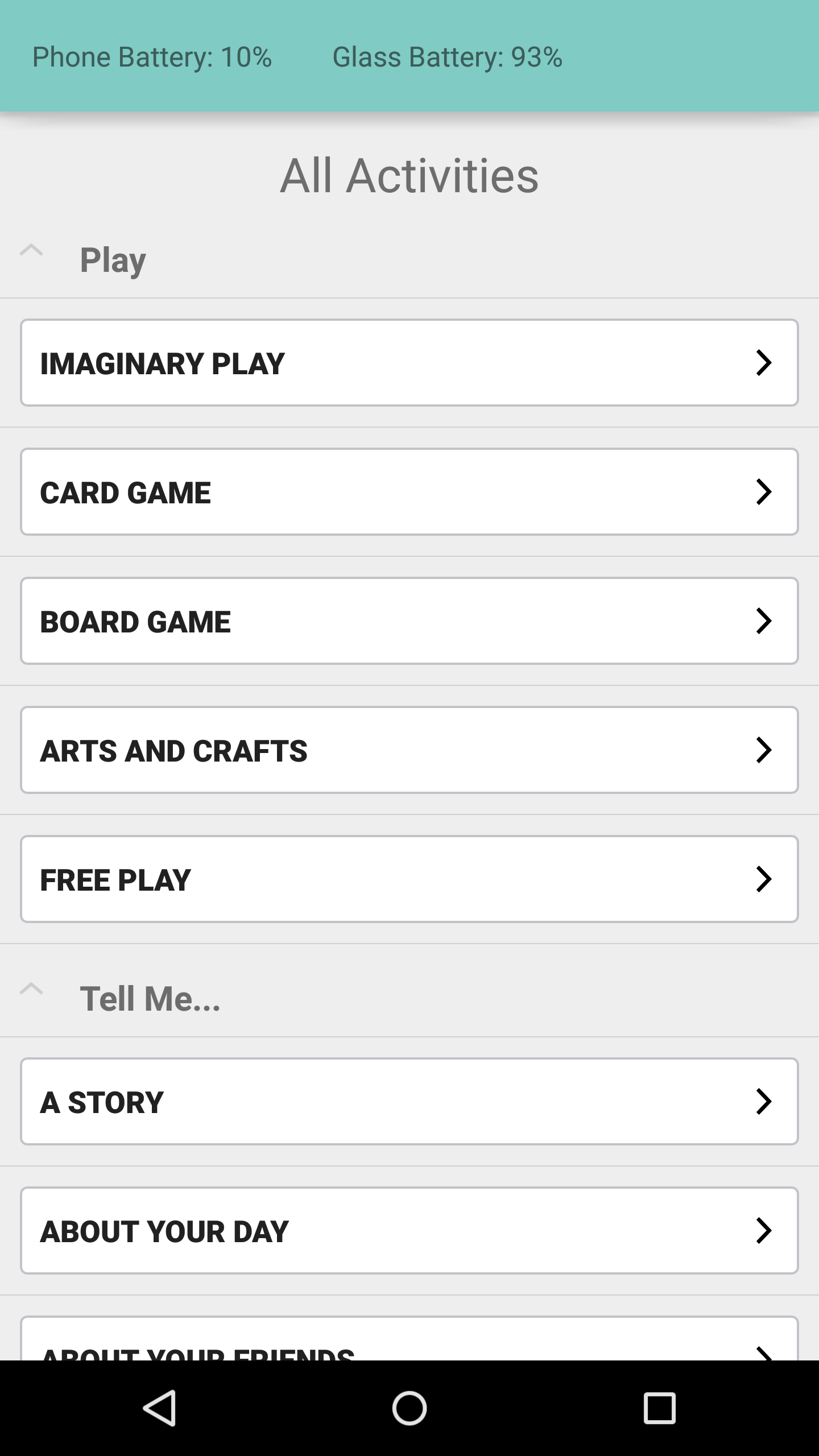

We ask families to come to Stanford for one in-person appointment to test out our new games and features. During the appointment, families will try the study device (a Google Glass headset, an Android phone, and the study app) for up to two hours, along with a few other games that we are developing.

If your family does not meet these requirements, please feel free to contact us to express your interest. We are always looking for families for future research projects, including a remote launch of this project, so don’t hesitate to reach out!

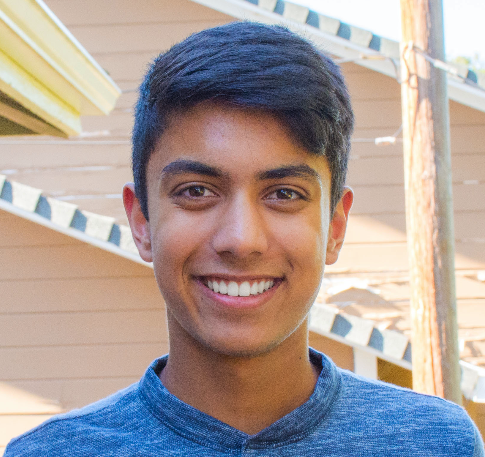

Meet some of our participants...

Autism glass in the press...

- Siri, Who Is Terry Winograd?, January 3, 2017, strategy+business

- Google Glass App Helps Kids with Autism 'See' Emotions, June 23, 2016, NBC News

- How Google Glass could help children with autism, May 12, 2016, CBS

- Can Google Glass help autistic children read faces?, June 23, 2016, Fox News Health

- Autism Glass Project kicks off, November 2, 2015, Stanford Daily

A transdisciplinary Stanford effort...

- Catalin Voss, Project Founder

- Nick Haber, Project Co-Founder & Walter V. and Idun Berry Postdoctoral Fellow

- Aaron Kline, Mobile Guru

- Sebastien Levy, Machine Learning Guru

- Azar Fazel, Machine Learning Guru

- Qandeel Tariq, Machine Learning Guru

- Peter Washington, UI/UX Engineer

- Jena Daniels, Study Manager

- Jessey Schwartz, Study Manager

- Kaiti Dunlap, Study Manager

- Nate Stockham, Audio Researcher

- Anish Nag, Research Collaborator

- Jennifer Phillips, Clinical Associate Professor in Psychiatry and Behavioral Sciences

With support from...